Last time, we saw how to develop a custom augmentation to be used in an Augraphy pipeline. In that post, we created a bare-bones pipeline which only ran our new augmentation.

The Augraphy package already includes a default pipeline containing every augmentation currently in the project, but there are reasons you might want to define your own. In this post, we’ll see how to create a custom pipeline, first by choosing the augmentations we want, then determining the sequence of their application, and later setting the probability of each augmentation’s execution.

At the end, we’ll use our custom pipeline to generate a large set of inputs for machine learning training.

Getting Started

To work on your local machine, clone the project directory and have a look around; we’ll be working primarily in augraphy/src/augraphy/augmentations.

Not ready to build locally? You can work in this Colab notebook, but be sure to open another browser window so you can follow along with this guide.

In any case, we’ll want to import the AugraphyPipeline class from augraphy.base.augmentationpipeline:

from augraphy.base.augmentationpipeline import AugraphyPipeline

Motivation

When building a machine learning model, using a wide range of training data helps improve the model’s accuracy in classification and prediction tasks. Augraphy is designed to facilitate generating significant variations in a small set of input images, turning a few examples into thousands.

The key to producing these differences in such large numbers is to combine a set of augmentations, each with a separate probability of transforming the input images. In Augraphy, we do this with a data structure called a pipeline, which contains several layers, or phases, of augmentations to apply to different parts of the image.

Choosing Augmentations

The choice of which augmentations to use in your custom pipeline comes down to which features you’re trying to train your model to handle, and this is dependent on your unique situation. Without knowing your use-case, we can’t tell you exactly what you’ll need in a pipeline, but there are some general heuristics you can follow to design your own. Of course, you can also contact us for a consultation.

An Example Scenario

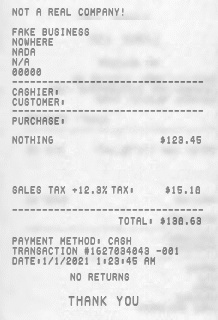

Maybe you have millions of receipts printed at point-of-sale units, and they’ve all been sitting for 20 years in a storage unit without climate control. Before they went into storage, they were hastily crammed into a drawer, or perhaps carried around in someone’s pocket, suffering some physical deformation. Most of these ink on these receipts is fading, and the original print wasn’t designed to be sharp and crisp anyway. You’ll need to build a pipeline that produces outputs that are visually similar to the physical papers you’ll be working with.

To help us coordinate our approach, let’s use an example image of some receipts:

Decomposing the Goal

Let’s break our target result up into component phases and effects, which we can address with some of our existing augmentations.

As mentioned before, an Augraphy pipeline contains multiple phases, each of which corresponds to a different layer of the output image. In general, we perform transformations on the text itself within the ink phase, on the texture of the underlying paper in the paper phase, and on the “printed” document within the post phase. Keep these in mind when deciding how to organize your custom pipeline.

The Ink Phase

Our documents were originally printed years ago on low-quality paper, by low-quality printers, so the characters weren’t printed cleanly in the first place. These aren’t the smooth lines created your modern office printers.

We’ll need an augmentation (or multiple) to add problems like scratches and dust, incomplete contact by the print-head, over-application of ink, and lines where the print failed. The “BleedThrough” and “LowInkRandomLines” augmentations should help us here.

The Paper Phase

From the time these receipts were first printed to the time we opened the storage unit, our documents have been suffering various forms of damage.

We’ll use an augmentation to generate this effect in the paper, so we can print some of the documents onto paper with non-uniform texture. The “NoiseTexturize” augmentation will let us change the texture of the paper, and we can find some pictures of paper online to feed into Augraphy’s “PaperFactory” for additional variation.

The Post Phase

Variable heat in the storage unit has caused the black of the ink to fade somewhat over the intervening years. Some of the receipts were initially kept in wallets where they gained new textures from the friction of walking around. Standard 3″ receipts are taller than paper bills, so they’re often folded to fit into wallets anyway, or undergo ad-hoc folding in the pocket.

Once we have a “fresh” document with crisp black ink printed on our multi-textured paper, we’ll apply more augmentations to simulate the fading of the whole document from wear over time, and these other kinds of physical damage. We can probably use the “GaussianBlur”, “Jpeg”, and “Folding” augmentations to achieve this result. This is also a good place to throw in the “DirtyDrum” augmentation, which simulates a printer with – you guessed it – a dirty print drum, leaving streaks on the print.

Assembling the Pipeline

We’ll import all of the classes providing these augmentations, as well as the AugmentationSequence class we need to combine them. The “GaussianBlur” augmentation can be applied in any layer, so it takes an extra argument telling it where it is.

# ink phase

from augraphy.augmentations.bleedthrough import BleedThroughAugmentation

from augraphy.augmentations.dirtydrum import DirtyDrumAugmentation

from augraphy.augmentations.lowinkrandomlines import LowInkRandomLinesAugmentation

# paper phase

from augraphy.augmentations.noisetexturize import NoiseTexturizeAugmentation

from augraphy.base.paperfactory import PaperFactory

# post phase

from augraphy.augmentations.gaussianblur import GaussianBlurAugmentation

from augraphy.augmentations.jpeg import JpegAugmentation

from augraphy.augmentations.folding import FoldingAugmentation

# structural classes

from augraphy.base.augmentationsequence import AugmentationSequence

Now we’ll just define the different phases as sequences of these augmentations.

ink_phase = AugmentationSequence([

BleedThroughAugmentation(),

LowInkRandomLinesAugmentation()

])

paper_phase = AugmentationSequence([

PaperFactory(),

NoiseTexturizeAugmentation()

])

post_phase = AugmentationSequence([

GaussianBlurAugmentation("post"),

JpegAugmentation(),

DirtyDrumAugmentation(),

FoldingAugmentation()

])

To construct our pipeline, we need only pass these phases in as arguments to the constructor:

pipeline = AugraphyPipeline(ink_phase, paper_phase, post_phase)

We’re already most of the way there, but we have a few more pieces to consider.

Extra Paper Textures

We said before that we’ll find some more pictures of paper online to add to the differences in our output images. For demonstration purposes, we’ll use two that came up in a Bing images search for “wrinkled paper”, filtered by images with a public domain license. Feel free to use your own.

We’ll save these into the paper_textures folder in the top level of the repository, but you could pick any other location; just make sure to pass an updated texture_path argument to the PaperFactory constructor, like this:

PaperFactory(texture_path="/path/to/my/paper_textures")

A Note on Probability

Mathematically, the probability of two independent events occurring (in our case, two separate augmentations being applied) is the product of their individual probabilities. In our case, we have 8 augmentations to apply; if we give each of these a 50% chance of running (the default for Augraphy augmentations), an output image will have a probability of

0.5 * 0.5 * 0.5 * 0.5 * 0.5 * 0.5 * 0.5 * 0.5 = 0.00390625

or a 1/256th chance to have all augmentations applied to it.

Of course, if these augmentations did the same thing every time, we still wouldn’t be able to generate enough differences. All of the augmentations in the Augraphy package internally use random number generation to add additional randomness. Calculating these probabilities is left as an exercise for the reader.

We do want to make sure that every phase actually runs, however, so we’ll need to amend our script to set a probability of 1 for each of the AugmentationSequence objects.

We’re ready to finally assemble our pipeline and synthesize some training data.

Generating a Dataset

The Input

To start us off, we’ll need an input image to serve as the base receipt. I used Fakereceipt.us to generate this, which I’ve named receipt.png in the directory we’ll run our script from:

The Final Pipeline

In addition to the probability changes, we need to add a little more code to do the following:

- import

cv2 - read in our input image

- pass the input into the pipeline

- display the result of one pipeline run

After adding these pieces, you should have something like this:

# ink phase

from augraphy.augmentations.bleedthrough import BleedThroughAugmentation

from augraphy.augmentations.dirtydrum import DirtyDrumAugmentation

from augraphy.augmentations.lowinkrandomlines import LowInkRandomLinesAugmentation

# paper phase

from augraphy.augmentations.noisetexturize import NoiseTexturizeAugmentation

from augraphy.base.paperfactory import PaperFactory

# post phase

from augraphy.augmentations.gaussianblur import GaussianBlurAugmentation

from augraphy.augmentations.jpeg import JpegAugmentation

from augraphy.augmentations.folding import FoldingAugmentation

# structural classes

from augraphy.base.augmentationsequence import AugmentationSequence

from augraphy.base.augmentationpipeline import AugraphyPipeline

# reading and displaying images

import cv2

ink_phase = AugmentationSequence([

BleedThroughAugmentation(),

LowInkRandomLinesAugmentation()

], p=1)

paper_phase = AugmentationSequence([

PaperFactory(),

NoiseTexturizeAugmentation()

], p=1)

post_phase = AugmentationSequence([

GaussianBlurAugmentation("post"),

JpegAugmentation(),

DirtyDrumAugmentation(),

FoldingAugmentation()

], p=1)

pipeline = AugraphyPipeline(ink_phase, paper_phase, post_phase)

image = cv2.imread("receipt.png")

oldreceipt = pipeline.augment(image)

cv2.imshow("generated-receipt", oldreceipt["output"])

cv2.waitKey(0)

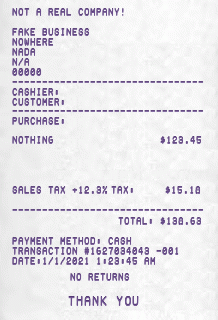

Here’s the first result:

Not bad! When this was printed, the printer drum was clearly dirty, and it looks like part of the receipt was folded over in a wallet, then smoothed out to be scanned.

We are missing some of the other effects we wanted to see, though. As noted before in the aside about probability, this is because every augmentation only has a 50% chance of running in a given pipeline execution. We can remedy the situation by generating many more images, expanding the range of variations in data our model will train on.

We’ll amend the single pipeline run to spawn many processes and execute the pipeline against a thousand images, saving them to the current folder. We’ll randomly generate a name for them, so they’re less likely to overwrite each other.

from multiprocessing import Pool

import random

image = cv2.imread("receipt.png")

images = [ image for i in range(1000) ] # 1000 copies of the image

def runPipeline(image):

oldreceipt = pipeline.augment(image)

cv2.imwrite(str(random.randint(0,100000)) + ".jpg", oldreceipt["output"])

p = Pool(32) # create 32 processes to run the pipeline in

p.map(runPipeline, images) # generate many old receipts from different pipeline runs

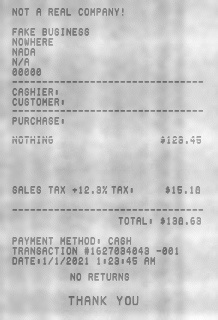

Now we have many more images to choose from, and we can see that the other effects have been applied.

Different paper texture and ink fading and blurring:

Text bleeding through from the reverse side of the image: