In the last post, we looked at a tool for generating realistic documents, to be used in machine learning training. This article introduces Augraphy, a Python library which solves the same problem while fitting easily into existing ML workflows in as few as 4 lines of code.

Problems, Solutions

The world has too many documents storing too much information. In a twist of irony, the solution is actually to add more documents!

Library of Babel

For the past several thousand years, physical artifacts have been produced to hold text. In the digital age, we’d like that information to be accessible and indexable by machines, but for that to happen, the text needs to be extracted from those artifacts by human or machine transcription. Human transcription is costly and slow even under the best of circumstances, and simply isn’t adequate for the vast amount of physical text out there.

Rise of the Machines

Enter optical character recognition. We can use OCR tools to recognize characters in an image and produce a string of such characters, which hopefully matches what was written on the physical thing. Nowadays, these tools frequently use machine learning algorithms internally, and so at some point need to be trained to read. We do that in a similar way to how you and I learned to read: someone shows us a lot of text (the training set) and tells us what it’s supposed to say (the ground-truth). Eventually we build a map in our minds of which shapes correspond to which meanings, and suddenly we’re literate.

Producing curated sets of training data is also quite difficult and time-consuming; however, to build these, a collection of suitable images must be compiled, and ground-truth associated, which often means more human transcription.

Recently, machine learning researchers and practitioners are turning to data augmentation techniques to alleviate this problem. These are methods which take a small number of ground-truthed images and produce additional images with substantial modifications, substantially increasing the quantity of data to train on.

Augraphy is a library for doing just this.

Lay of the Land

Several other data augmentation libraries exist, but with much broader scopes than Augraphy’s, and usually written with different applications in mind. The big 4 in this space are:

The first three of these libraries have a focus on general image augmentations, like the filters and transformations you might apply in your phone’s image editor. The last library is Facebook’s, and accordingly is designed around image transformations common to their social media platforms.

To find other augmentation libraries, this GitHub repository is a great resource.

DocCreator

Very few libraries exist that are specifically geared towards /document/ augmentation. DocCreator is one such project designed specifically for turning a small set of ground-truthed documents into a large set of semi-synthetic altered documents, suitable for evaluating or training algorithms for character recognition tasks. We covered some of the features of DocCreator and its use in the previous post.

Despite being an otherwise fantastic tool, DocCreator has some problems:

- It’s a large and complicated C++ application that isn’t particularly easy to extend by researchers or engineers.

- Because it is designed to be interactive, you really notice the computation it’s doing when using it.

- Fitting it into an existing machine learning workflow is not straightforward, as there are no Python bindings to allow seamless use with

numpyandpandas. - There is no scripting interface to allow automated document augmentation; DocCreator must be manually used.

- There are several frustrating bugs that make its use challenging.

How Augraphy Compares

We created Augraphy to fill these gaps. Augraphy is an easy to use library designed to enable users to rapidly produce complex document augmentation pipelines with a few lines of simple Python code, while providing an easily-extended package architecture for specialist use and development.

Compared to the issues-list above, Augraphy:

- is a relatively small Python package, using industry-standard coding style and architecture.

- is fast; running the default pipeline on a 500 pixel square image usually takes about a quarter of a second on modern hardware. I regularly use it to generate thousands of unique images in a minute or two.

- already depends on

numpy; all images are internally represented asnumpyarrays and users ofnumpyandpandaswill feel right at home - is already a Python package, and easily scripted

- is undergoing active use and bug-fixing all the time; if you see one, please let us know in the GitHub issues.

Ease of Use

Making Augraphy easy for anyone to use was a major focus of the development team. In fact, if you don’t want to define your own pipeline, we provide a prebuilt pipeline with sensible defaults, which can be used to produce training sets of realistic documents with a high degree of variation, without manual setup or intervention. All you need is a set of input images to run the pipeline on. If you wanted to, you could even use DocCreator to generate the ground-truthed documents and then pass them into Augraphy. (I did this to generate the pictures below).

Using the default Augraphy pipeline couldn’t be any easier. Assuming your input image is stored as a numpy array in the img variable:

from augraphy import default_augraphy_pipeline

pipeline = default_augraphy_pipeline()

augmented_image = pipeline.augment(img)

new_image = augmented_image["output"]

Key Features

Rather than just producing transformed documents, Augraphy’s pipeline allows you to inspect and alter the intermediate results it obtained along the way. This is extremely useful for debugging, but also gives you the flexibility to define new augmentations and new pipelines, should you wish to. A common problem with image augmentation libraries is the lack of transparency about what’s happening at each step, and we have handily solved that in Augraphy.

Augraphy’s Augmentations

Augraphy focuses on producing realistic document degradations, which look like real paper you might find out in the world. All Augraphy augmentations are designed to produce images of printed text on various types of paper media (newspaper, printer paper, etc), rather than general image transformations. Some of these are below.

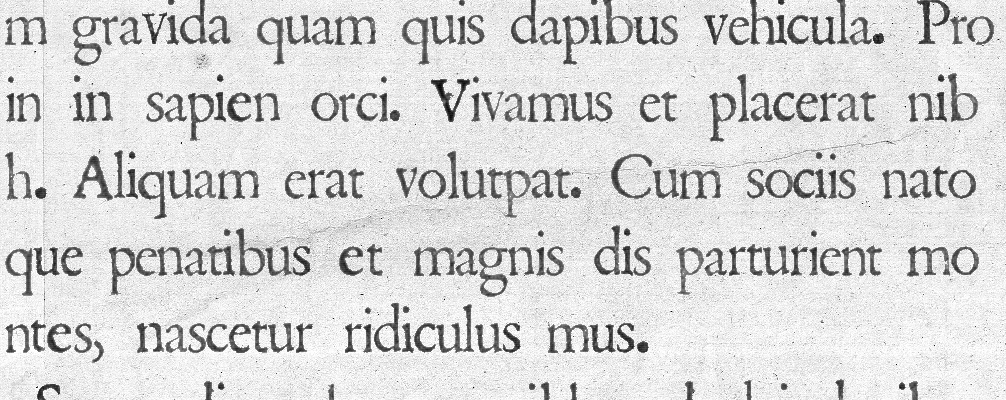

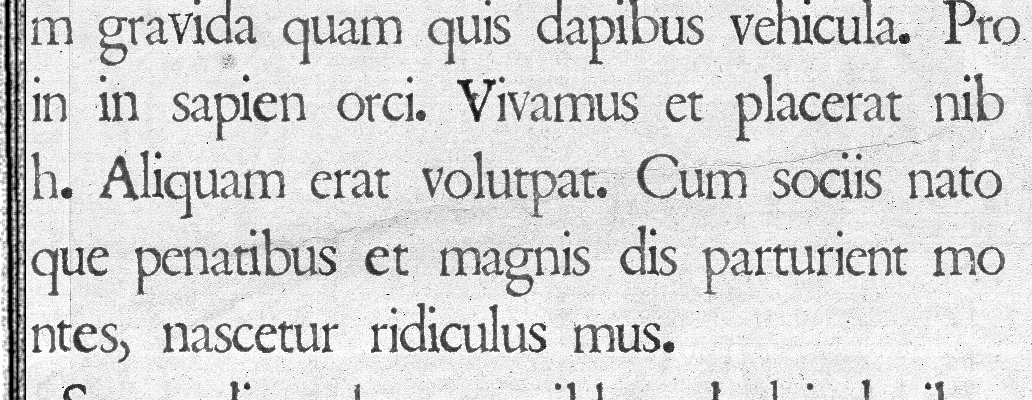

For reference, here’s the image I used as the base to show the effects below. I generated this image with DocCreator.

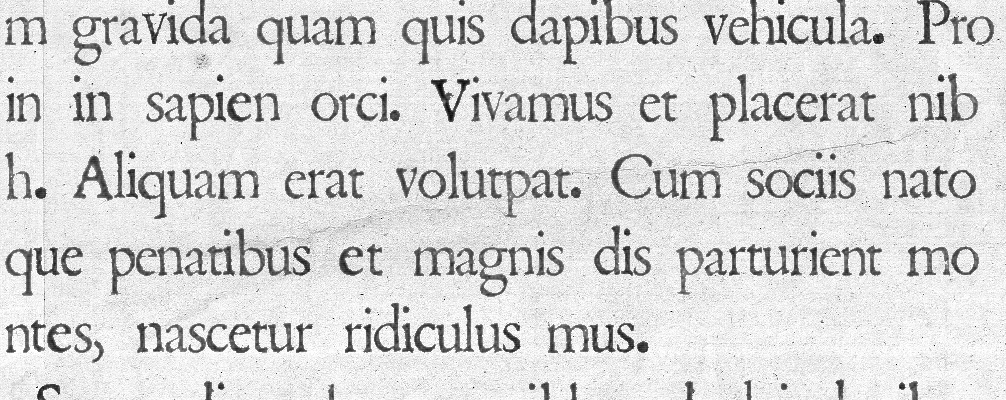

Letterpress

Printing presses often make uneven contact with the page, leaving regions with less or no ink.

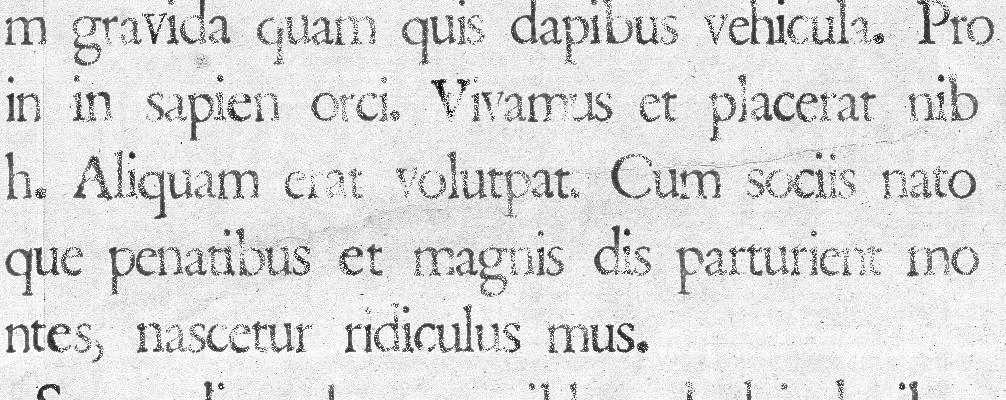

Dirty Rollers

Document scanners in some high-traffic environments sometimes accumulate dirt and ink dust, and leave streaks on the documents they scan.

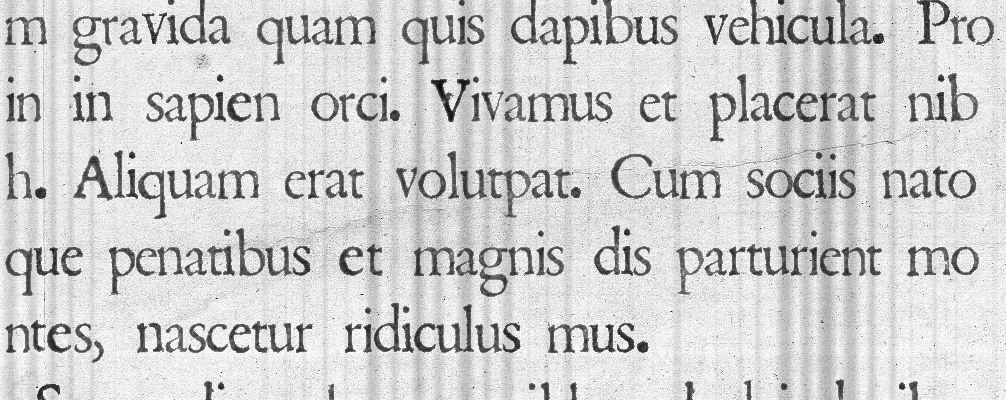

Page Borders

Pages that are scanned, copied, or faxed often aren’t perfectly aligned with the machine, or have incorrectly cropped borders.

Bleedthrough

Certain kinds of paper and printing techniques can cause ink to seep through the page and become visible on the reverse side.

Low Ink Periodic Lines

High-use printers often run out of ink, and this only becomes noticable when prints start to have blank streaks across the page.

Interoperability

You may want to use general image augmentations from other libraries within your Augraphy pipeline. If you need an augmentation from Albumentations or imgaug, for example, you can import those libraries in your code and pass those augmentations into the constructor for Augraphy’s ForeignAugmentation wrapper class.

Getting Started

You can install Augraphy with pip right now, or clone the project’s GitHub repository.

Detailed walkthroughs for building an augmentation and for defining custom pipelines are available on the Sparkfish blog. You can follow those guides and use the linked Google Colab notebooks to create and test your custom augmentation and pipeline.